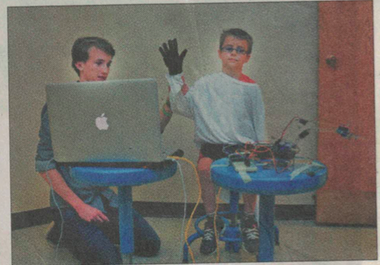

This project uses OpenCV to map the arm movements of a human user onto a robotic arm. The arm has three degrees of freedom and was built using this Instructables document. The gripper is currently non-functional (the woes of broken servos).

To read human arm movements, I used OpenCV-Python to write a script that tracks a hand in a single-color glove through a laptop’s webcam. The script starts with a calibration phase that stores the extremes of the user’s reach along each direction the arm can travel (forward/backward, left/right, up/down). It then continually reads the center location of the hand (for up/down and left/right motion) and the size of the hand onscreen (for forward/backward motion), and maps each value, relative to its calibrated extremes, to a servo position.
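The calibrate-then-map step can be sketched roughly as below. This is a minimal illustration, not the project's actual script: the names `AxisRange`, `calibrate`, and `to_servo_angle` are hypothetical, the 0 to 180 degree servo range is an assumption, and the readings are assumed to be plain numbers (for example a hand-center coordinate in pixels, or the hand's onscreen area) already extracted from the webcam frames.

```python
from dataclasses import dataclass


@dataclass
class AxisRange:
    """Calibrated extremes of one tracked quantity (hypothetical helper)."""
    low: float
    high: float

    def update(self, value: float) -> None:
        """Widen the range so that it includes a new calibration reading."""
        self.low = min(self.low, value)
        self.high = max(self.high, value)


def calibrate(readings):
    """Build an AxisRange from the readings collected during calibration."""
    rng = AxisRange(readings[0], readings[0])
    for value in readings[1:]:
        rng.update(value)
    return rng


def to_servo_angle(value, rng, angle_min=0.0, angle_max=180.0):
    """Linearly map a reading within its calibrated range to a servo angle.

    The 0-180 degree default is an assumption; real servos may differ.
    """
    span = rng.high - rng.low
    if span <= 0:
        # Degenerate calibration: fall back to the middle of the servo range.
        return (angle_min + angle_max) / 2
    t = (value - rng.low) / span
    t = max(0.0, min(1.0, t))  # clamp readings that exceed the calibrated reach
    return angle_min + t * (angle_max - angle_min)


# Example: hand-center x positions (pixels) seen during calibration.
x_range = calibrate([120, 500, 310])
angle = to_servo_angle(310, x_range)  # midpoint of the reach -> 90.0
```

Clamping keeps the servo inside its range when the hand moves past the calibrated reach. The same function would serve all three axes, with the hand's onscreen size standing in for forward/backward distance.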

I did this project as part of the Wooster robotics club. The script can be found on the GitHub Project Page.